you suck at GPT4 because you don't know what your X is

please stop insulting the machine god with Y questions

You excitedly call a friend and tell him about GPT-4: a near-godlike QA system. He tries GPT-3.5 for a bit, and it spits a bunch of text at him. “Oh, I see, it's like Google Assistant!” he says.

Wait, why isn't he excited? Is he not seeing what you’re seeing?

“Garbage in, garbage out,” my middle school mathematics teacher would say as he helped the students with their calculators. The kids would mess up the order of operations, so the symbols they typed in didn't match the problem. The calculator's output was correct, but the answer was wrong!

The original sin, then, was asking the machine the wrong question.

The X/Y problem

Anyone who has worked in support is familiar with the XY problem. Support agents spend most of their time teaching users what their problem really is.

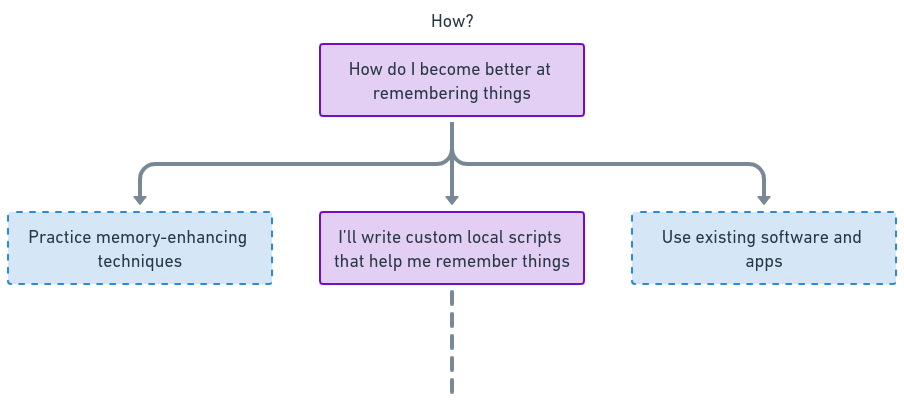

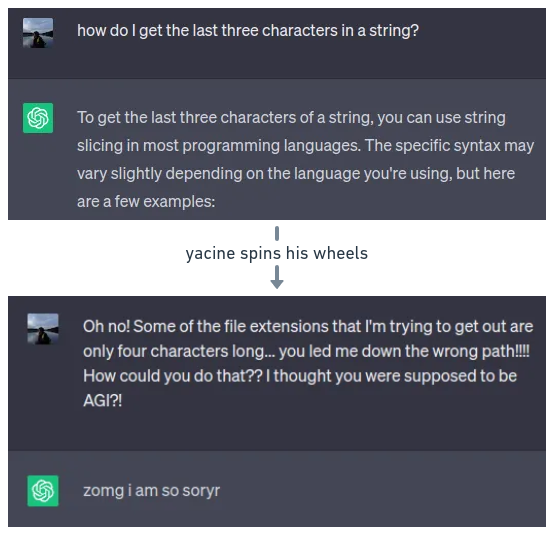

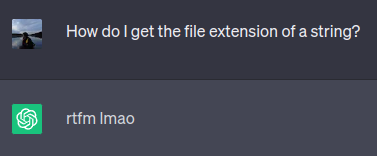

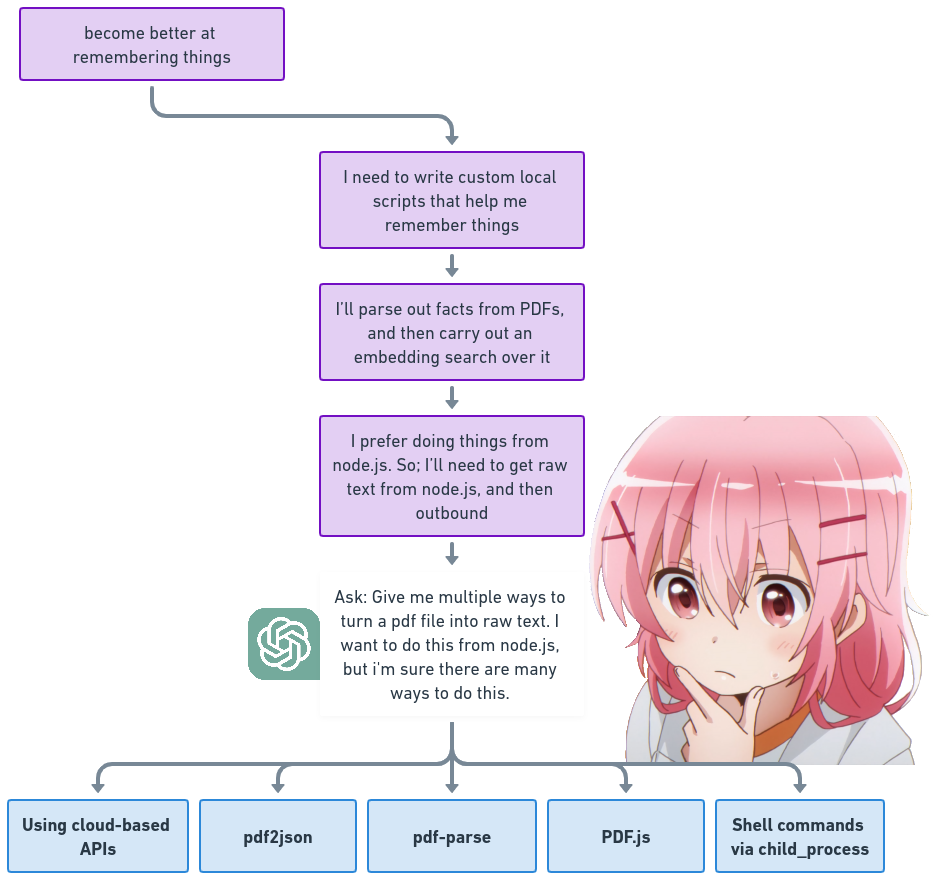

Users don't even want to ask questions! They’d much rather figure it out themselves than deal with human inference latency. So, to solve problem X, they'll first attempt a string of actions. At some point they get stuck on one of those actions, and only then do they come to you and ask: "How do I do Y?"

You'll spin your wheels with the user, helping them do Y. But Y was a suboptimal route to X all along.

We are the users. And we keep on insulting our godlike LLM QA systems with Y questions.

Asking gooder questions

(Non-nerd version at the bottom.)

Let a question's “goodness” be the likelihood that the answer it gets serves some meta goal.
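Written as a formula, using my own shorthand (q for the question, G for the meta goal; neither symbol comes from any standard notation):

```latex
\operatorname{goodness}(q) = \Pr\left(\text{the answer to } q \text{ serves } G\right)
```

Under this definition, a question can be perfectly answerable and still score low, because the correct answer it gets doesn't move you toward G. That's the calculator problem all over again.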

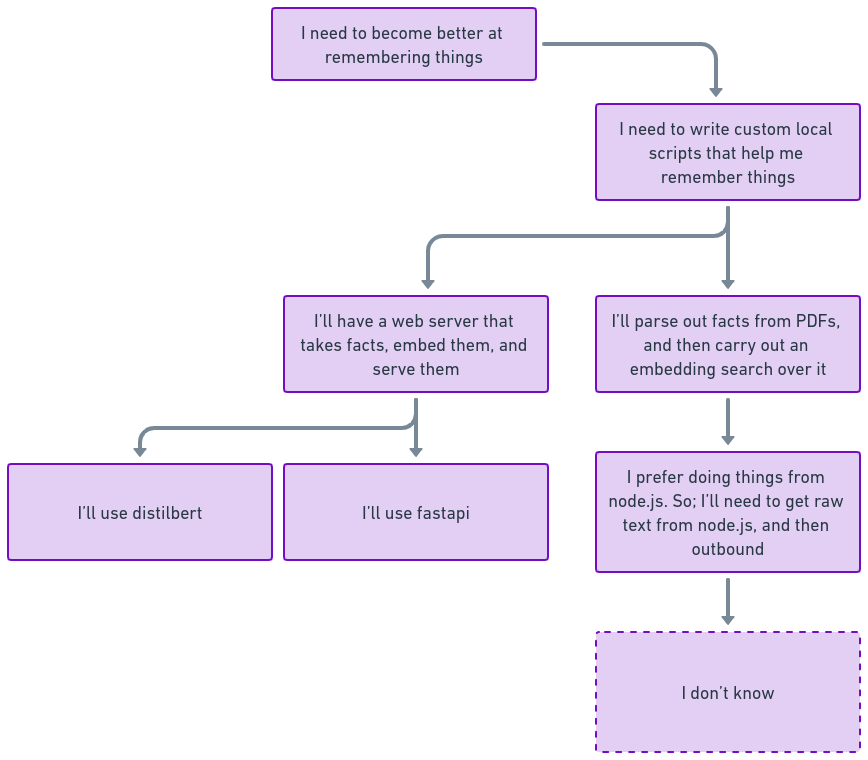

A meta goal isn’t what you're currently doing to reach some goal. It's the highest-level goal you're trying to serve. A meta goal is served by a set of actions, and those actions are goals in and of themselves. An action, being a goal, can in turn be composed of smaller actions. This forms a tree.
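For the nerds, here's a minimal sketch of that goal tree as a data structure. The `Goal` class, its methods, and the example goals are all hypothetical, made up for illustration; the only idea taken from the text is that every action is itself a goal with its own sub-actions, and the root is the meta goal.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Goal:
    """A node in the goal tree: every action is itself a goal with sub-actions."""
    description: str
    actions: list[Goal] = field(default_factory=list)
    # Back-pointer to the goal this action serves; excluded from repr/eq
    # so printing or comparing nodes doesn't recurse up and down the tree.
    parent: Goal | None = field(default=None, repr=False, compare=False)

    def add(self, description: str) -> Goal:
        """Attach a smaller action (which is also a goal) and return it."""
        child = Goal(description, parent=self)
        self.actions.append(child)
        return child

    def path_from_root(self) -> list[str]:
        """Walk up to the meta goal and return the path, root first."""
        node: Goal | None = self
        path: list[str] = []
        while node is not None:
            path.append(node.description)
            node = node.parent
        return list(reversed(path))


# Hypothetical tree: the goals are invented for this example.
meta = Goal("stay healthy")
eat = meta.add("eat better")
dinner = eat.add("cook dinner tonight")
buy = dinner.add("buy ingredients")

print(buy.path_from_root())
```

The question you actually type ("what should I buy?") lives at a leaf; `path_from_root()` recovers the X that sits above it.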

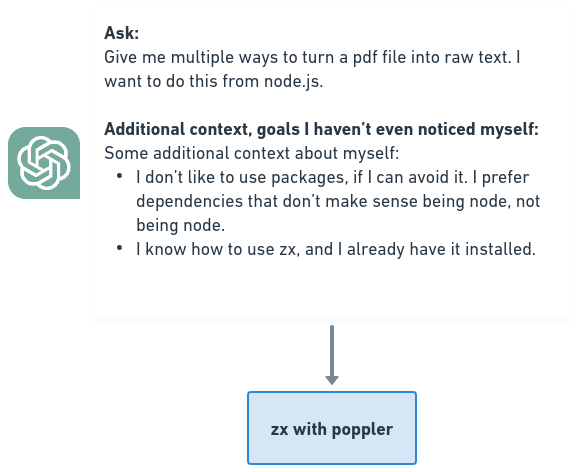

To get a useful answer to the question "I don't know", provide the entire context, not just the question itself! This constrains the space of possible next actions, which in turn increases the likelihood that the answer serves our meta goal.

We got a bunch of answers from GPT-4. But, to be frank, many of the options suck! Why? Most likely because there's some part of X that you haven't noticed.

In this case, GPT-4 doesn't know that I tend to avoid unreviewed mystery meat packages. In fact, I only remembered this preference when I read the answer. To fix this, I can update my X and give it back to the LLM!

Humans are awful at knowing what their X is. The good news is that GPT-4 can work as an X-surfing tool! For example, you can take the path you chose from the root of the tree and feed it into the LLM to discover the other paths you could have taken.
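Here's a rough sketch of what "give the LLM your whole X" could look like in practice. `build_x_prompt` is a hypothetical helper, not part of any library, and the path and question are invented; it only assembles the prompt text, and sending it to a model is left to whatever client you use. It shows the loop described above: ask with the path from the root, notice a preference you forgot, add it to X, and ask again.

```python
def build_x_prompt(path: list[str], preferences: list[str], question: str) -> str:
    """Assemble a prompt carrying the whole X (root-to-leaf goal path plus
    known preferences) instead of only the leaf-level Y question."""
    lines = ["My goals, from the highest-level meta goal down to what I'm doing now:"]
    for depth, step in enumerate(path):
        # Indent each level so the tree structure survives in plain text.
        lines.append(f"{'  ' * depth}- {step}")
    if preferences:
        lines.append("Constraints and preferences I've noticed so far:")
        lines.extend(f"- {p}" for p in preferences)
    lines.append(f"My current question: {question}")
    # Ask for alternative branches too: this is the "X surfing" part.
    lines.append(
        "Answer the question, then list other paths from the top-level goal "
        "that I could have taken instead."
    )
    return "\n".join(lines)


# Hypothetical path from the root of the goal tree.
path = ["stay healthy", "eat better", "cook dinner tonight"]
prefs: list[str] = []
first = build_x_prompt(path, prefs, "What should I buy for dinner?")

# After reading the answers, you remember a preference you never stated,
# so you update X and ask again.
prefs.append("I avoid unreviewed mystery meat packages")
second = build_x_prompt(path, prefs, "What should I buy for dinner?")
print(second)
```

The design choice is simply that the question goes last: the model reads the whole tree above it before it sees the Y.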

Asking gooder questions (non-nerd version)

you gotta know yourself to know what you want, to ask the right question, which gets you what you really want, and sometimes, it takes time to find out what you really want, and there’s no way a GPU could know what you really want, if it doesn’t know anything about who you really are

Thanks for reading!

raw notes at yacine.ca
